Most teams do not fail because they lack automation. They fail because their automation is fragile, undocumented, and impossible to trust at 2 a.m.

If your automation estate looks like a folder of one-off scripts, you probably have speed in the small and chaos in the large. You get quick wins, but every new script becomes another thing that breaks silently, runs with too much privilege, or only works on one laptop.

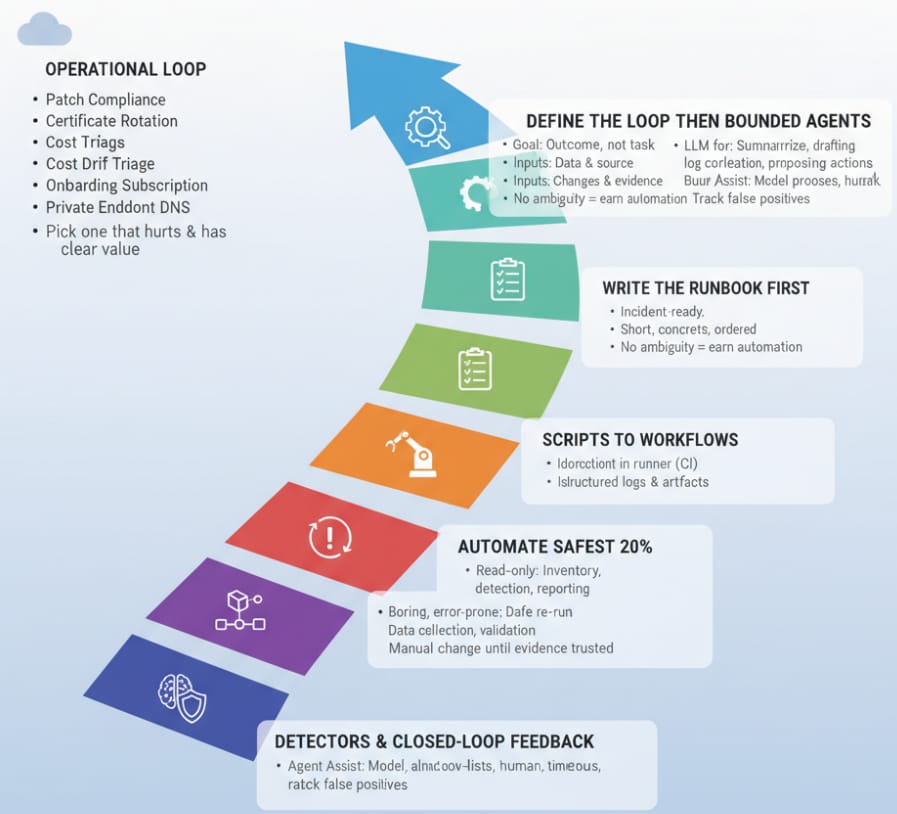

This roadmap gives you a simple maturity ladder for moving from scripts to reliable workflows to agentic operations, without turning your platform into a brake pedal.

TL;DR

· Scripts are fine for discovery and quick wins, but they are a weak long-term operating model.

· Workflows are where repeatability, evidence, approvals, and rollback live. This is where real scale shows up.

· Agentic operations can reduce toil and shorten triage time, but only after you have a truth layer (telemetry + inventory) and hard safety boundaries.

· A runbook is the bridge: write the checklist first, then automate one step at a time.

The three stages in plain language

1) Scripts: fast, local, and easy to break

· Great for: proving a concept, gathering data, or doing a one-time clean-up.

· Risk: hidden assumptions (paths, permissions, environment), no guardrails, and no audit trail.

· Smell test: if a person has to remember how to run it, it is not a system.

2) Workflows: repeatable operations with guardrails

· Great for: any task you expect to run more than once, especially in production.

· Core traits: versioned inputs, idempotent steps, logs, retries, approvals, and evidence capture.

· Smell test: if it cannot be re-run safely, it is not a workflow.

3) Agentic operations: goal-driven assistance with bounded action

· Great for: triage, drafting change plans, correlating signals, and proposing safe actions.

· Risk: confident mistakes. The bigger the blast radius, the tighter the boundaries must be.

· Smell test: if the agent can change production without a hard constraint, you are gambling.

The maturity ladder

Think of this as a set of rungs you can climb. You do not need to jump straight to the top. The payoff comes from moving your most important operational loops from brittle to repeatable.

| Level | What it is | What you gain | Non-negotiables |
| --- | --- | --- | --- |
| 0. Manual runbook | Human follows a checklist | Consistent steps, clear owners | Runbook lives in a shared system, not a wiki graveyard |
| 1. Copy-paste scripts | Single script run by a person | Speed for one-off tasks | Script is in version control, not a desktop |
| 2. Standard scripts | Parameter-driven scripts with modules | Reuse and fewer surprises | Linting, unit checks where possible, and sane defaults |
| 3. Workflow runner | Orchestrated steps with logs | Repeatability and visibility | Idempotency, retries, structured logging |
| 4. Guarded workflows | Approvals and policy checks | Safe production changes | Least privilege, change gates, evidence artifacts |
| 5. Signal-driven workflows | Detectors trigger action | Lower MTTR and less toil | Alert quality, suppression, and safe backoff |
| 6. Agent assist | LLM drafts plan and queries data | Faster diagnosis, better runbooks | Truth layer, source citations, human approval |
| 7. Agentic ops (bounded) | Agent executes within constraints | Self-service operations at scale | Hard boundaries, rollback, and continuous evaluation |

Roadmap: how to climb without breaking production

Pick one operational loop that hurts and has clear value. Examples: patch compliance, certificate rotation, cost drift triage, onboarding a subscription, or private endpoint DNS onboarding.

Step 1: Define the loop like a product

· Goal: the outcome you are trying to achieve, not the task you perform to get there.

· Inputs: what data you need and where it comes from.

· Outputs: what changes in the world and what evidence is produced.

· Owners: who approves, who executes, and who is on call when it fails.
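One way to make this definition concrete is to capture it as a small record that lives next to the automation. The sketch below is illustrative only: the class name `OperationalLoop`, its field names, and the sample values are assumptions, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalLoop:
    """Hypothetical record describing one loop; all names are illustrative."""
    goal: str            # the outcome, not the task
    inputs: list[str]    # data the loop reads and where it comes from
    outputs: list[str]   # changes made and evidence produced
    approver: str
    executor: str
    on_call: str

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# Sample values are made up for illustration.
loop = OperationalLoop(
    goal="No certificate expires in production",
    inputs=["certificate inventory export"],
    outputs=["renewed certificates", "run artifact attached to ticket"],
    approver="platform-lead",
    executor="",  # not decided yet
    on_call="platform-on-call",
)
print(loop.missing_fields())
```

If `missing_fields()` returns anything, the loop is not yet defined well enough to automate.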

Step 2: Write the runbook first

Write the checklist the way you would want it during an incident. Keep it short, concrete, and ordered. If a step is ambiguous, you have not earned automation yet.

Step 3: Automate the safest 20 percent

· Start with read-only: inventory, detection, and reporting.

· Automate the parts that are boring and error-prone: data collection and validation.

· Keep the change step manual until your evidence is trusted.

Step 4: Move from scripts to workflows

· Put execution in a runner (CI, scheduled job, or orchestration platform).

· Make steps idempotent. Re-running should be safe.

· Emit structured logs and keep artifacts (inputs, outputs, diffs).

· Add gates: approvals for high-impact changes, policy checks for baseline compliance.
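As a minimal sketch of what "idempotent" and "structured logs" can mean in practice, the step below checks current state before changing anything and emits one JSON log line per run. The function `ensure_tag` and the in-memory `vm` dictionary are stand-ins invented for this example; a real step would call your platform's API instead.

```python
import json
import logging

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("workflow")


def ensure_tag(resource: dict, key: str, value: str) -> bool:
    """Set a tag only if it differs. Returns True when a change was made."""
    tags = resource.setdefault("tags", {})
    before = tags.get(key)
    changed = before != value
    if changed:
        tags[key] = value
    # One machine-readable line per step: what ran, on what, and the diff.
    log.info(json.dumps({
        "step": "ensure_tag",
        "resource": resource["id"],
        "key": key,
        "before": before,
        "after": value,
        "changed": changed,
    }))
    return changed


# Hypothetical resource held in memory for the example.
vm = {"id": "vm-example-01", "tags": {}}
first = ensure_tag(vm, "owner", "platform-team")   # makes the change
second = ensure_tag(vm, "owner", "platform-team")  # safe re-run, no change
```

The second call is the point: re-running the step reports `changed: false` instead of failing or duplicating work, and both runs leave evidence behind.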

Step 5: Add detectors and closed-loop feedback

· Where possible, trigger automation from signals rather than from a calendar schedule.

· Add backoff and circuit breakers to avoid repeat failures.

· Track false positives. Bad alerts destroy trust.
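The backoff and circuit-breaker idea can be sketched in a few lines. This is a simplified illustration, not a production implementation: the class names, the default limits, and the injectable `sleep` parameter are all assumptions made for the example.

```python
import time
from typing import Callable


class CircuitOpen(Exception):
    """Raised when the breaker refuses to run the action."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.max_failures = max_failures
        self.base_delay = base_delay
        self.sleep = sleep  # injectable so tests do not actually wait
        self.failures = 0

    def call(self, action: Callable[[], object]) -> object:
        # Stop retrying once the failure budget is spent; a human takes over.
        if self.failures >= self.max_failures:
            raise CircuitOpen("too many consecutive failures")
        try:
            result = action()
        except Exception:
            self.failures += 1
            # Exponential backoff: base, 2x base, 4x base, ...
            self.sleep(self.base_delay * 2 ** (self.failures - 1))
            raise
        self.failures = 0  # any success resets the counter
        return result
```

A workflow would wrap its remediation step in `breaker.call(...)` and treat `CircuitOpen` as the signal to stop and escalate rather than keep hammering a failing target.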

Step 6: Introduce agent assist, then bounded agents

Use an LLM where it is strong: summarizing incidents, drafting runbooks, correlating logs, and proposing safe next actions. Do not use it as the system of record. Your platform telemetry, inventory, and policy state are the truth.

· Agent assist: the model proposes. A human approves.

· Bounded agents: the model can act, but only inside a strict sandbox with allow-lists, timeouts, and rollback paths.

· High-blast-radius actions stay gated until you have months of evidence that the loop is stable.
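A hard boundary is easiest to reason about when it is plain code that sits between the model and the platform. The sketch below assumes a hypothetical `ProposedAction` shape and made-up action and environment names; the point is only that the check is deterministic and deny-by-default.

```python
from dataclasses import dataclass

# Hypothetical allow-lists; production is absent on purpose.
ALLOWED_ACTIONS = {"restart_service", "stop_vm"}
ALLOWED_ENVIRONMENTS = {"dev", "test"}


@dataclass(frozen=True)
class ProposedAction:
    name: str
    target: str
    environment: str
    has_rollback: bool


def authorize(action: ProposedAction) -> tuple[bool, str]:
    """Deny by default; return the decision and the reason for the audit log."""
    if action.name not in ALLOWED_ACTIONS:
        return False, "action not on allow-list"
    if action.environment not in ALLOWED_ENVIRONMENTS:
        return False, "environment requires human approval"
    if not action.has_rollback:
        return False, "no rollback path"
    return True, "allowed"


print(authorize(ProposedAction("stop_vm", "vm-example-01", "prod", True)))
```

Anything the guard rejects falls back to the agent-assist path: the model's proposal goes to a human instead of being executed.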

Are you ready for agentic operations?

If you cannot answer yes to most of these, stay in workflows a bit longer.

· We have a reliable inventory of resources and owners (tags, CMDB, or both).

· We can explain our approval model in one sentence (who can change what, when).

· We have a place where evidence lives (logs, tickets, run artifacts) and it is easy to find.

· We can roll back the top three automation actions we want an agent to take.

· We have alert quality under control (paging is meaningful, noise is low).

· Secrets are handled safely (no hard-coded keys, short-lived access where possible).

· The blast radius of each action is explicitly bounded (scope, rate limits, allow-lists).

· We test changes in a safe environment that resembles production.

· We track outcomes with metrics (toil hours, MTTR, change failure rate).

· We treat automation like a product with owners, backlog, and maintenance.
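If you want to track this checklist over time, a rough self-assessment can be scripted. The item keys below are shorthand for the ten statements above, and the 0.7 threshold is an assumed reading of "most", not a published rubric.

```python
# Shorthand keys for the ten checklist statements (names are illustrative).
CHECKLIST = [
    "inventory", "approval_model", "evidence_store", "rollback",
    "alert_quality", "secrets", "blast_radius", "safe_test_env",
    "outcome_metrics", "product_ownership",
]


def readiness(answers: dict[str, bool], threshold: float = 0.7) -> tuple[bool, list[str]]:
    """Return (ready, gaps). Unanswered items count as 'no'."""
    gaps = [item for item in CHECKLIST if not answers.get(item, False)]
    score = (len(CHECKLIST) - len(gaps)) / len(CHECKLIST)
    return score >= threshold, gaps


answers = {item: True for item in CHECKLIST}
answers["rollback"] = False
answers["alert_quality"] = False
ready, gaps = readiness(answers)
print(ready, gaps)
```

The list of gaps is more useful than the score: it tells you which workflow-stage work to finish before giving an agent more room.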

Common failure modes and how to avoid them

Script sprawl: Create a single repo, enforce code review, and delete or replace one-offs.

Automation that only works for the author: Standardize inputs and run in a shared runner, not a personal machine.

No evidence: Log everything, keep artifacts, and tie changes to tickets.

Too much privilege: Use least privilege, scope to the smallest target, and rotate credentials.

Agent hype: Treat LLMs as drafting and triage helpers first. Earn autonomy with data.

Example: cost drift triage as a ladder climb

Here is what one operational loop can look like as you climb. This is intentionally generic so you can map it to your tooling.

1. Manual: weekly review meeting, someone screenshots dashboards.

2. Scripts: a script exports spend and top drivers for a scope.

3. Workflow runner: scheduled job posts a summary and stores artifacts.

4. Guarded workflow: for obvious waste, open a ticket and propose the change with a rollback note.

5. Signal-driven: anomaly signal triggers the workflow and pages only when thresholds and confidence are met.

6. Agent assist: LLM summarizes changes, pulls relevant runbook steps, and drafts the ticket comment with links to evidence.

7. Bounded agent: within allow-lists, the agent can stop non-production resources that meet strict criteria, then post evidence and open a follow-up ticket.
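The signal-driven rung is where "thresholds and confidence" turn into code. The routing sketch below is one possible shape under assumed numbers: the `Anomaly` fields and the default thresholds are placeholders you would tune against your own false-positive data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Anomaly:
    scope: str
    overspend_pct: float  # percent above expected spend
    confidence: float     # detector confidence, 0.0 to 1.0


def route(a: Anomaly, page_pct: float = 50.0, ticket_pct: float = 10.0,
          min_confidence: float = 0.8) -> str:
    """Decide what a cost anomaly deserves: a page, a ticket, or just a record."""
    if a.confidence < min_confidence or a.overspend_pct < ticket_pct:
        return "record"  # keep as evidence, wake nobody
    if a.overspend_pct >= page_pct:
        return "page"
    return "ticket"


print(route(Anomaly("subscription-example", overspend_pct=65.0, confidence=0.9)))
```

Low-confidence signals are still recorded, which gives you the data needed to measure false positives before tightening or loosening the thresholds.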

Closing thought: You do not need more automation. You need fewer, better automations that you can trust. Write the runbook, build the workflow, then earn the agent.

Want the one-page ladder and scoring rubric I use to assess an automation estate?

Grab it here! Compliments of CloudLoom Studio.

It includes: a maturity scorecard, a runbook-first checklist, and a roadmap worksheet you can use to prioritize the next three loops to automate.